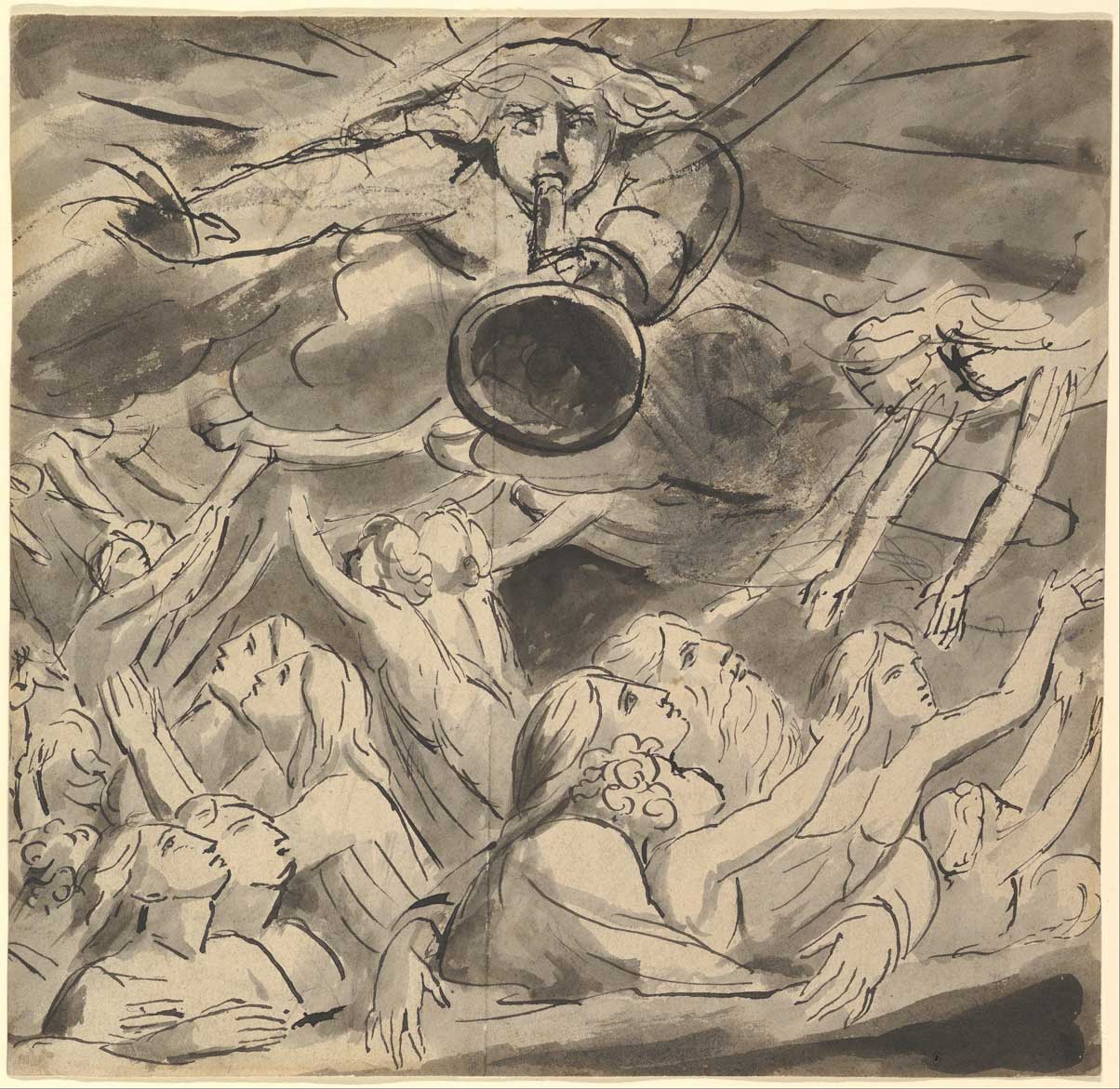

Am I Going to Heaven When I Die?

I’ve always believed that Christians go to heaven when we die. It’s a staple of many people’s faith. Where did that doctrine come from, and what does the Bible say about heaven? Join me as I survey the various verses that talk about heaven.
